Microsoft Mesh: A New Mixed Reality Platform

If you grew up watching Star Wars holograms, or if you've seen any of the myriad sci-fi movies where characters work with holographic charts floating in front of them, you'll probably be impressed to see this technology becoming a reality. At the recent Ignite 2021 conference, we were introduced not only to security announcements but also to a new mixed reality platform: Microsoft Mesh. The folks at Microsoft were clearly excited to showcase what this technology makes possible. The keynote itself was delivered over Mesh to demonstrate how the platform can create shared virtual spaces filled with futuristic imagery. Here's a look at what was presented.

How It Works

Microsoft Mesh is built on Azure, Microsoft's expansive cloud infrastructure, and several technical tools play a part in creating the world of Mesh. Azure Object Anchors, now in preview, automatically aligns relevant 3D content with objects in the physical world. For example, employees assembling machinery could see a virtual layout showing where each component should be placed. This provides efficiency-boosting visual guidance without the need for physical labels or markers.

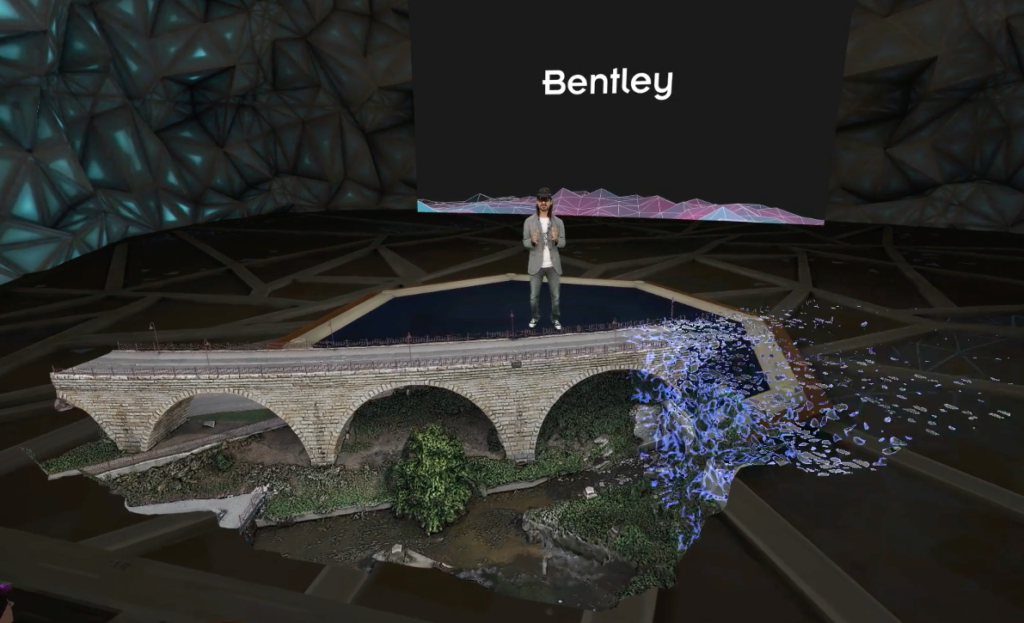

Azure Remote Rendering renders high-fidelity 3D models in the cloud and streams them to devices in real time. One way to provide the source data for these models is drone footage of the actual structures in the real world. This makes it possible to display a structure in front of you when you can't physically visit it, with enough true-to-life detail to carry out tasks like inspection and physical analysis virtually.

Viewing these 3D renderings immersively requires a headset such as HoloLens 2, Oculus, or a Windows Mixed Reality headset. The visuals can also be viewed on PCs, Macs, or mobile devices.

When it comes to the virtual representation of people, Microsoft Mesh builds on years of research into HoloLens, hand and eye tracking, and AI. The current expectation is that users will initially represent themselves through avatars and will eventually be able to project photorealistic holograms of themselves.

Putting Mesh to Use

One way this kind of technology can be used is to let people attend performances and events virtually. The innovation group Lune Rouge (based in Quebec and founded by Guy Laliberté, who also co-founded Cirque du Soleil) has started the Hanai World Project to explore this goal. The aim is to create digital entertainment venues in mixed reality for people who can't attend in person. According to Lune Rouge's executive director, Alexandre Miasnikof, this could complement live performances and add another layer to human interaction.

On the scientific side, OceanX is already putting this technology to work. A nonprofit dedicated to research and education, OceanX uses Microsoft Mesh to let scientists virtually experience the explorations of its deep-sea vehicles. Team members can view 3D representations of vessel missions in real time and access additional data tags on the subjects they're observing. They can use this information to guide the vessel's mission, potentially discovering things they wouldn't otherwise have found.

How to Explore Microsoft Mesh

These are just a couple of the applications that can benefit from Microsoft Mesh, and Microsoft is inviting collaborators to expand on the possibilities. In the coming months, the Mesh platform will offer developers a complete set of tools for building their own virtual worlds, including avatar creation, spatial rendering, user synchronization, and holoportation. In the meantime, you can get a closer look by watching the Ignite 2021 keynote, in which Alex Kipman, Technical Fellow for AI and Mixed Reality, demonstrates Microsoft Mesh (starting around 15 minutes in). Then keep an eye out for a growing number of projects and platforms built on the technology, such as applications that help people with autism.

(Note: Although we think virtual reality technology is cool, we still recognize the importance of living fully in actual reality too. Read more about various realities here.)

Stay connected. Join the Infused Innovations email list!
